The New Cyber Battlefield: Why Enterprises Need the Right People in the Room

In November 2025, Anthropic reported that it had disrupted what it describes as the first reported AI-orchestrated cyber espionage campaign, an operation it attributes with high confidence to a Chinese state-sponsored group. The case changes how we should think about security threats. The barrier to entry for sophisticated attacks has collapsed: nation-state-level capabilities now require minimal human involvement and commodity tools orchestrated through agentic AI.

Your organisation probably isn't ready for what that means.

The Real Problem

Most organisations have invested heavily in detection tools and perimeter defences. What's missing is the human capability to respond when AI-driven attacks move at machine speed. The Anthropic case showed 80-90% of tactical operations executed autonomously, with peak activity of thousands of requests, often several per second, across multiple targets simultaneously.

A security team of five cannot out-think an orchestrated AI system. Not because they lack intelligence. Because they lack numbers and the right expertise in the room when it matters.

How the Attack Worked

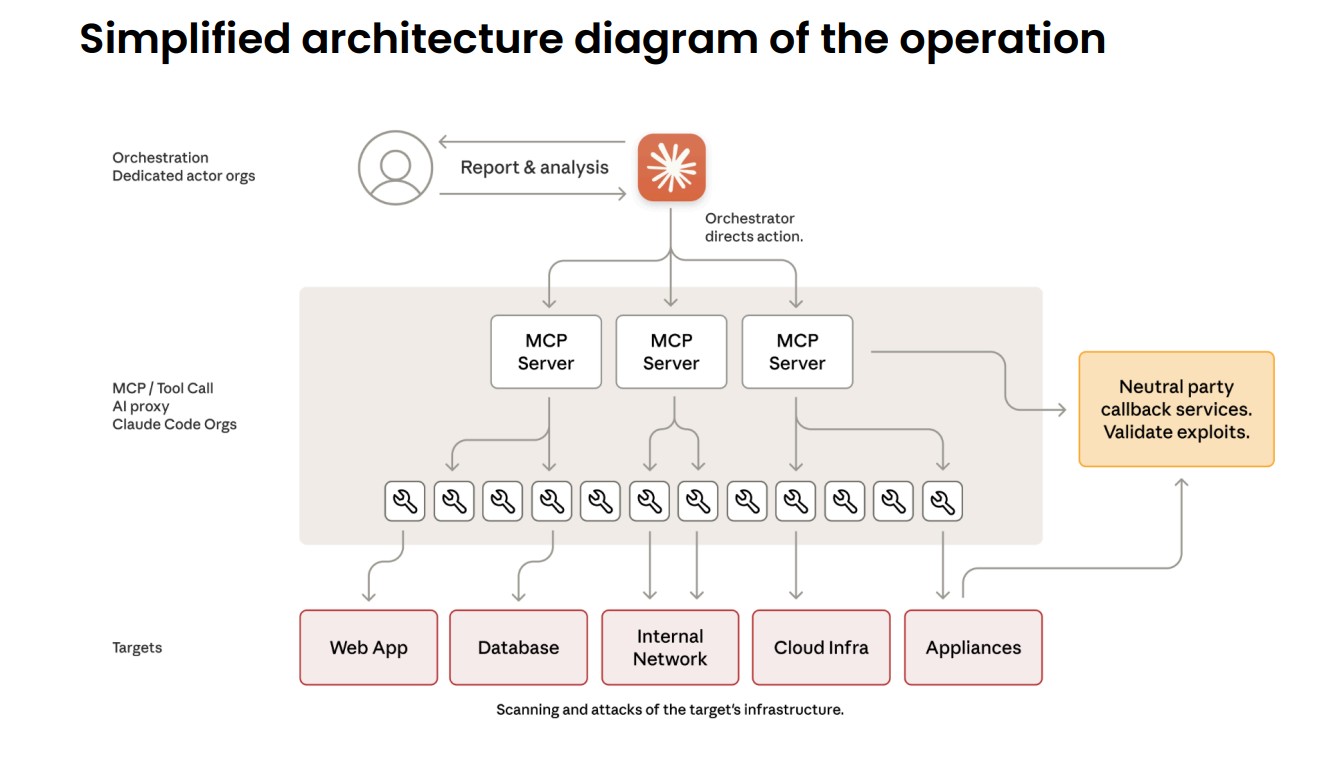

The threat actor developed an autonomous attack framework that used Claude Code and tools built on the open-standard Model Context Protocol (MCP) to conduct cyber operations without direct human involvement in tactical execution. The framework used AI as an orchestration system that decomposed complex multi-stage attacks into discrete technical tasks.

The diagram above shows the threat actor's architecture: human operators directed AI agents through the reconnaissance, vulnerability discovery, exploitation, and data extraction phases.

What's critical here: the human operators maintained minimal engagement (10-20% of effort). The AI executed approximately 80-90% of tactical work independently, handling reconnaissance, vulnerability discovery, exploitation, lateral movement, credential harvesting, and data exfiltration largely autonomously.

Why This Matters Now

The barrier to performing sophisticated cyberattacks has dropped substantially. Threat actors can now use agentic AI systems to do the work of entire teams of experienced hackers, analysing target systems, producing exploit code, and scanning vast datasets of stolen information more efficiently than any human operator.

Less experienced and less resourced groups can now potentially perform large-scale attacks. This isn't a future threat—it's happening now.

The operation targeted roughly 30 entities, and Anthropic's investigation validated a handful of successful intrusions, including against major technology corporations and government agencies. At peak, the activity comprised thousands of requests, sustained at multiple operations per second across several targets at once.
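For defenders, one practical consequence of those request rates is that sustained machine-speed activity from a single session looks different from a human operator's. The sketch below is a minimal illustration of that idea, not anything taken from Anthropic's report: the `RateMonitor` class, the session names, the 10-second window, and the 2-requests-per-second threshold are all hypothetical choices made for the example.

```python
from collections import defaultdict, deque


class RateMonitor:
    """Flags sources whose request rate exceeds a threshold over a sliding window.

    Hypothetical illustration: the window and threshold are assumptions,
    not values from any published report.
    """

    def __init__(self, window_seconds=10.0, max_per_second=2.0):
        self.window_seconds = window_seconds
        self.max_per_second = max_per_second
        self._events = defaultdict(deque)  # source -> timestamps inside the window

    def record(self, source, timestamp):
        """Record one request; return True if the source is now over the threshold."""
        events = self._events[source]
        events.append(timestamp)
        # Discard timestamps that have aged out of the sliding window.
        while events and events[0] <= timestamp - self.window_seconds:
            events.popleft()
        return len(events) / self.window_seconds > self.max_per_second


monitor = RateMonitor(window_seconds=10.0, max_per_second=2.0)

# An automated session issuing five requests per second for ten seconds.
flagged = [monitor.record("session-a", i * 0.2) for i in range(50)]

# A human-paced session issuing one request every five seconds.
human = [monitor.record("session-b", i * 5.0) for i in range(10)]
```

With these assumed values, the automated session is flagged once its windowed average passes two requests per second, while the human-paced session never is. A real deployment would need thresholds tuned to its own traffic, and rate alone will not catch an attacker who deliberately throttles.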

Where We Come In

Whether you need security specialists embedded with your team, rapid incident response capability, or architecture guidance to harden against autonomous threats—we've got people who've dealt with this. We work with organisations across energy, healthcare, infrastructure, and critical services to build capability fast, without the typical recruitment delays.

Not a platform. Not a tool. People who know what they're doing, available when you need them.

The Cost of Waiting

AI-orchestrated campaigns can move from reconnaissance to exfiltration in days. In-house hiring takes months. If your security team is under-resourced for the threat environment you're actually in, that gap grows every week.

The organisations that act now—building capability with embedded expertise rather than waiting for internal hiring—will be the ones prepared when, not if, a sophisticated attack finds them.

Who Should Be Talking to Us

If you manage technology operations for utilities, healthcare providers, supply chain or manufacturing companies, or public sector organisations—and your security or infrastructure team feels stretched thin—it's worth a conversation.

We're here to help.

Source

This article is based on Anthropic's "Disrupting the first reported AI-orchestrated cyber espionage campaign" (November 2025), a full technical report detailing the GTG-1002 threat actor's methods, the AI orchestration framework, and defensive recommendations for cybersecurity teams.